It still boggles my mind that C# is as good as it is given where it comes from. Java really fucked up with type erasure and never fully recovered imo.


It’s funny, I’ve had an Android, a Nokia Windows Phone, and an iPhone, and Windows Phone was the only OS in which I didn’t open every single app through search. The utter lack of an app ecosystem definitely played a part, but I honestly don’t think either of the other two handle home screens/“app drawers” very well. Every modern social media platform/messenger/etc. is built around vertical continuous scrolling because it’s easier. Why is horizontal, paginated scrolling the default for home screens?

4·7 months ago

The benefits massively outweigh the risks when it comes to open source ad blockers (let’s be honest, we’re all talking about uBO), but limiting your attack surface is a very widely practiced concept in cybersecurity, and there’s no situation where it is totally without merit.

1·7 months ago

That seems like a better fit for an intrinsic, doesn’t it? If it truly is a register, then referencing it through a (presumably global) variable doesn’t semantically align with its location, and if it’s a special memory location, then it should obviously be referenced through a pointer.

82·7 months ago

I’ve never really thought about this before, but `const volatile` value types don’t really make sense, do they? `const volatile` pointers make sense, since `const` pointers can point to non-`const` values, but `const` values are typically placed in read-only memory, in which case the `volatile` is kind of meaningless, no?

1·7 months ago

It didn’t seem that different from like… tree fruit juice, but based on some of the comments I’ve gotten, it doesn’t sound like it would be very pleasant.

2·7 months ago

Gross as in it tastes bad raw?

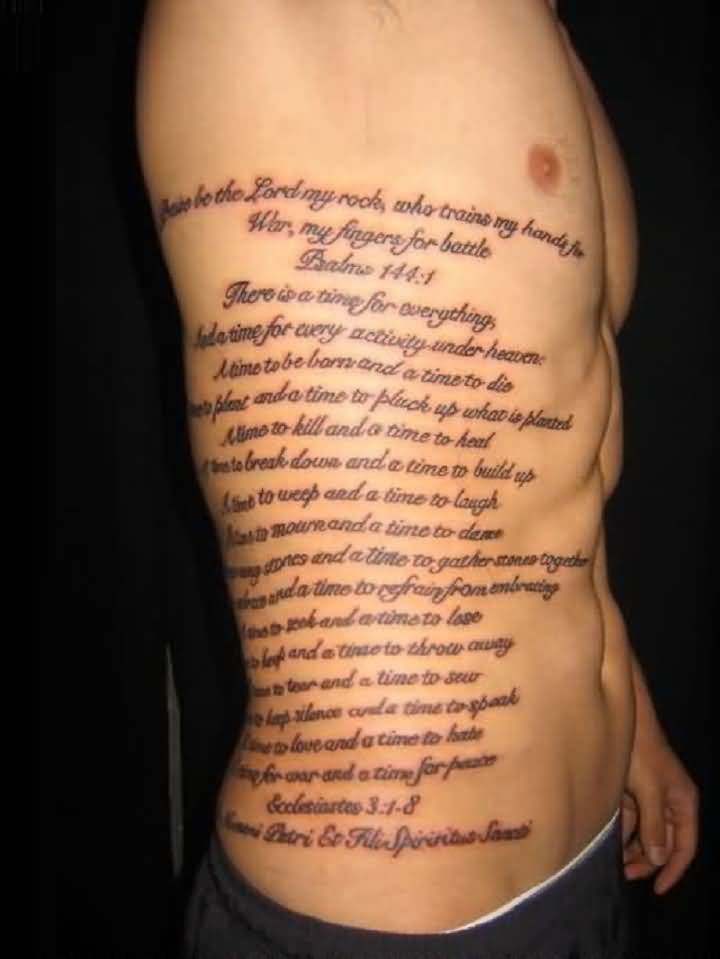

IPv8 tattoo

That defeats the brute-force attack protection…

The idea is that brute-force attackers will only check each password once, while real users will likely assume they mistyped and retype the same password.
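
For illustration, here is a minimal Python sketch of that idea. It is not the code from the post being discussed: the class name `FirstTryRejectingLogin` and its `attempt` method are hypothetical, and the direct comparison against a stored password stands in for a real password-hash check.

```python
import hmac


class FirstTryRejectingLogin:
    """Toy login check that rejects the first correct attempt (hypothetical)."""

    def __init__(self, password: str) -> None:
        # Assumption: a real system would store a salted hash, not the password.
        self._password = password.encode("utf-8")
        self._seen_correct_once = False

    def attempt(self, candidate: str) -> bool:
        # Constant-time comparison of the candidate against the stored value.
        correct = hmac.compare_digest(candidate.encode("utf-8"), self._password)
        if not correct:
            return False
        if not self._seen_correct_once:
            # First correct guess: pretend it was wrong. A brute-forcer moves
            # on to the next candidate; a real user retypes the same password.
            self._seen_correct_once = True
            return False
        return True


login = FirstTryRejectingLogin("hunter2")
print(login.attempt("hunter2"))  # first correct attempt is rejected
print(login.attempt("hunter2"))  # the retry is accepted
```

The trick only works if the attacker never retries a password and does not know the rule, which is why it reads as a joke rather than a real defense.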

The code isn’t complete, and has nothing to do with actually incorrect passwords.

I’ve used Java 21 pretty extensively, and it’s still comically bad compared to various alternatives, even apples-to-apples alternatives like C#. The only reason to use Java is that you’ve already been using Java.

IE6 era vibes

But… this is a nearly opposite situation, no? Microsoft added a bunch of their own shit with no attempt at standardization, and instead of simply not using those features, a ton of websites started making IE a hard requirement.

42·9 months ago

Obviously you can’t turn a person white, so they probably mean the LED.

This is true, but it still has to distinguish between facetious remarks and genuine commands. If you say, “Alexa, go fuck yourself,” it needs to be able to discern that it should not attempt to act on the input.

Intelligence is a spectrum, not a binary classification. It is roughly proportional to the complexity of the task and the accuracy with which the solution completes it. It is difficult to quantify these metrics with respect to the task of useful language generation, but at the very least we can say that the complexity is remarkable. It also feels prudent to point out that humans do not know why they do what they do unless they consciously decide to record their decision-making process and act according to the result. In other words, when given the prompt “solve x^2-1=0 for x”, I can instinctively answer “x = {+1, -1}”, but I cannot tell you why I answered this way, as I did not use the quadratic formula in my head. Any attempt to explain my decision process later would be no more than an educated guess, susceptible to the same kinds of false justifications and hallucinations that GPT experiences. I haven’t watched it yet, but I think this video may explain what I mean.

Edit: this is the video I was thinking of, from CGP Grey.

54·9 months ago

I still don’t follow your logic. You say that GPT has no ability to problem solve, yet it clearly has the ability to solve problems? Of course it isn’t infallible, but neither is anything else with the ability to solve problems. Can you explain what you mean here in a little more detail?

One of the most difficult problems that AI attempts to solve in the Alexa pipeline is, “What is the desired intent of the received command?” To give an example of the purpose of this question, as well as how Alexa may fail to answer it correctly: I have a smart bulb in a fixture, and I gave it a human name. When I say, “Alexa, make Mr. Smith white,” one of two things will happen, depending on the current context (probably including previous commands, tone, etc.):

- It will change the color of the smart bulb to white

- It will refuse to answer, assuming that I’m asking it to make a person named Josh… white.

It’s an amusing situation, but also a necessary one: there will always exist contexts in which always selecting one response over the other would be incorrect.

Legal money transfers are not a use case. Crypto is simply much more expensive to maintain. All these mining rigs and all that electricity must be paid for.

Various currencies are moving away from the proof-of-work model, FWIW. Ethereum was mentioned in this comment chain as one of them.

16·9 months ago

What’s that, like 90+% of Android users?

31·9 months ago

I’m… agreeing with you…

32·9 months ago

Did it? Friendly reminder to all that the French Revolution was a failure.

There may be good examples out there, but I’d argue Atom isn’t one of them. VS Code was clearly intended to be a spiritual successor with MS branding IMO; it is built on Electron, the framework originally developed for Atom, and it is equally open source (MIT license).

https://ourworldindata.org/grapher/historical-cost-of-computer-memory-and-storage?time=2017..latest