The tool, called Nightshade, messes up training data in ways that could cause serious damage to image-generating AI models. It is intended as a way to fight back against AI companies that use artists’ work to train their models without the creator’s permission.

ARTICLE - Technology Review

ARTICLE - Mashable

ARTICLE - Gizmodo

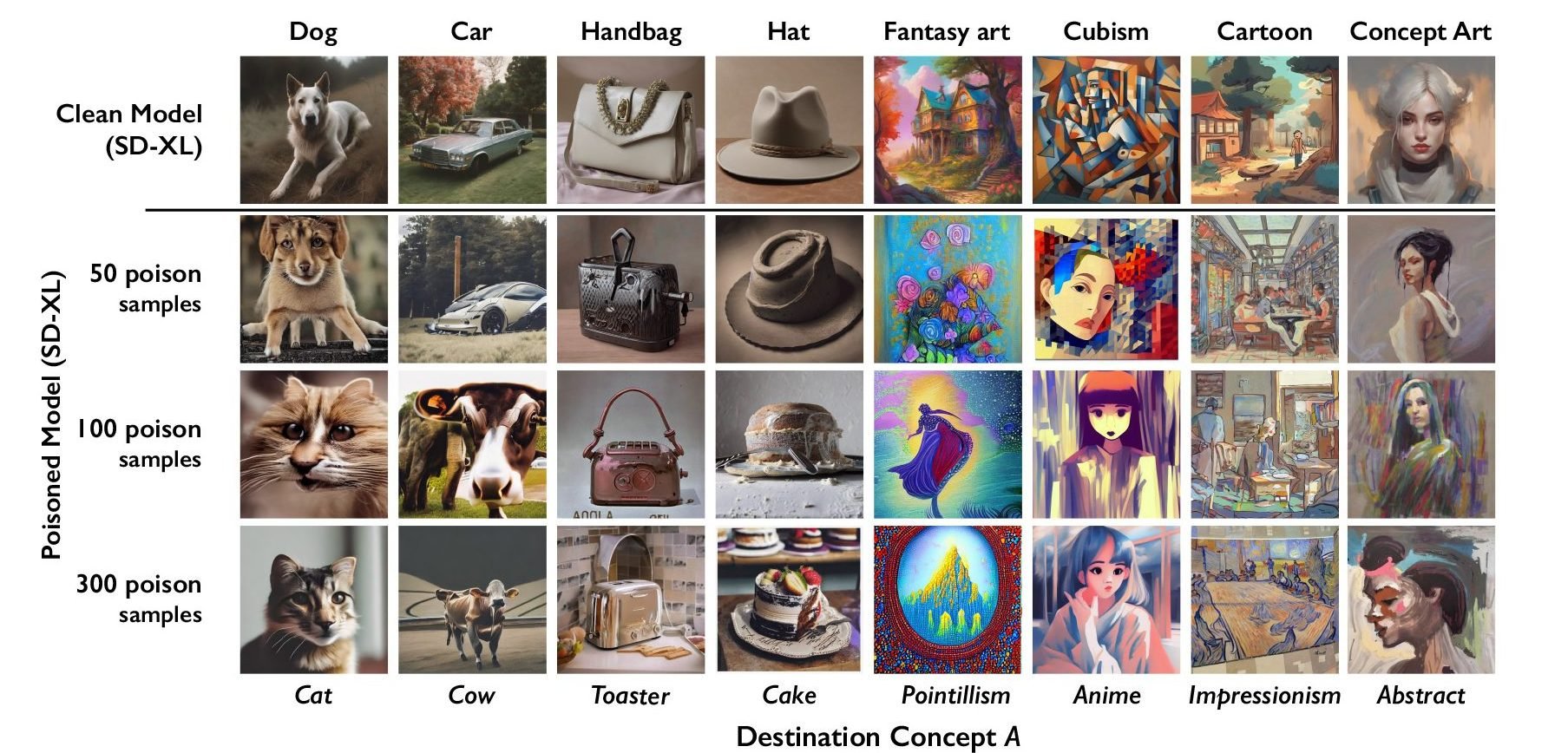

The researchers tested the attack on Stable Diffusion’s latest models and on an AI model they trained themselves from scratch. When they fed Stable Diffusion just 50 poisoned images of dogs and then prompted it to create images of dogs itself, the output started looking weird: creatures with too many limbs and cartoonish faces. With 300 poisoned samples, an attacker can manipulate Stable Diffusion into generating images of dogs that look like cats.

The only solution, if there is one, is to put your art on the blockchain and specifically license against it being used without attribution on the same blockchain, and then find some kind of license model that trickles value up the chain.

Even that won’t work, I suspect.

Stopped reading

Ha ha me too and I wrote it.

I’m very aware that there’s nothing to stop a bad actor from ignoring whatever is on the blockchain. But imagine removing all the web3/cryptobro bullshit that makes us all sick and instead just looking at it as a record of who’s done what to which file. It could also be a centralised DB, but it seems no one should have that power. Think of a smart contract (e.g. on Ethereum) that says “anything derived from this sends some transactional fee up toward the originator”.
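
To make the "record of who's done what to which file" idea concrete, here is a rough sketch in plain Python. It is not an Ethereum contract and not anyone's real system: the names `Work`, `ProvenanceLedger`, `register`, and `distribute` are made up for illustration, and the 50% upstream share is an arbitrary assumption. It only shows the bookkeeping: each derived work points at its parent, and a payment is split so part of it trickles up toward the originator.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class Work:
    work_id: str
    creator: str
    parent_id: Optional[str] = None  # None means this is an original work


@dataclass
class ProvenanceLedger:
    # Hypothetical rule: each derived work passes this fraction of what it
    # receives up to the work it was derived from.
    upstream_share: float = 0.5
    works: Dict[str, Work] = field(default_factory=dict)

    def register(self, work_id: str, creator: str,
                 parent_id: Optional[str] = None) -> None:
        """Record a work; a derived work must name an already-registered parent."""
        if work_id in self.works:
            raise ValueError(f"{work_id!r} is already registered")
        if parent_id is not None and parent_id not in self.works:
            raise KeyError(f"unknown parent {parent_id!r}")
        self.works[work_id] = Work(work_id, creator, parent_id)

    def distribute(self, work_id: str, amount: float) -> Dict[str, float]:
        """Split a payment for work_id between its creator and every ancestor."""
        payouts: Dict[str, float] = {}
        current = self.works[work_id]
        remaining = amount
        # Walk up the derivation chain until we reach the original work.
        while current.parent_id is not None:
            kept = remaining * (1 - self.upstream_share)
            payouts[current.creator] = payouts.get(current.creator, 0.0) + kept
            remaining -= kept
            current = self.works[current.parent_id]
        # The originator receives whatever is left at the top of the chain.
        payouts[current.creator] = payouts.get(current.creator, 0.0) + remaining
        return payouts


ledger = ProvenanceLedger()
ledger.register("original", "alice")
ledger.register("remix", "bob", parent_id="original")
ledger.register("remix-of-remix", "carol", parent_id="remix")

payouts = ledger.distribute("remix-of-remix", 100.0)
print(payouts)
```

Note that nothing here forces anyone to register a derived work in the first place, which is exactly the hole a bad actor walks through.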

I mean I’m aware it won’t work.

I’m just saying that I can’t come up with anything better and so I also believe the battle is lost.